Blazefire Saber is a gunblade, a weapon that can transform between sword and firearm forms. Lightning herself changes between the two on the fly, with both forms being equally powerful, but in appearances outside of Final Fantasy XIII the weapon tends to work only as a sword. There is an inscription on the saber that reads "Invoke my name - I am Spark," written in the Pulsian alphabet. In Final Fantasy XIII Episode Zero -Promise-, Lieutenant Amodar says the inscription is present exclusively on Lightning's blade. Upon close inspection of the render on the game cover, there is an inscription that reads "Made in Cocov(Cocoon)" in the Cocoon alphabet.

Blazefire Saber is a first-tier weapon available for Lightning. It is her initially equipped weapon and can also be obtained from the Retail Network Up In Arms for 2,000 gil. It can be upgraded to the Flamberge by using the Perovskite item, and provides physical defense as its chain ability. Regardless of what weapon Lightning has equipped, pre-rendered cutscenes show her wielding the Blazefire Saber. When boarding the Purge train, she hands her weapon to PSICOM, but reclaims it when the Purgees take over the train as it enters the Hanging Edge.

Despite the Blazefire Saber's lack of abilities and low attack compared to some other gunblades, its balance between Strength and Magic makes it useful for Lightning, as she is balanced herself. Using the Blazefire Saber allows her to switch easily between physical and magical attacks without a loss in comparative attack power. It also requires fewer Experience Points to level up compared to some of her other weapons.


Players need to choose six numbers from two separate pools: five different numbers from 1 to 70 for the white balls and one number from 1 to 25 for the gold Mega Ball. Players can select the numbers themselves, or the lottery terminal will select them randomly. Players win the jackpot by matching all six winning numbers in a drawing.

Mega Millions Numbers - December 31st 2010

Below you can find the numbers from the Mega Millions draw on December 31st 2010. These are the five winning numbers and Mega Ball that you need to match to win a prize for the draw on December 31st. In the table further down you can see the prizes you win for matching a certain number of these. You can find the overall payout table on this page.

In the December 30 drawing alone, there were 2,776,599 winning tickets, with prizes ranging from $2 up to $1 million. One ticket, sold in Ohio, matched the five white balls to win the game's $1 million second-tier prize. Across the country, 82 tickets matched four white balls plus the Mega Ball to win the third-tier prize.

Players can add the Megaplier to a Mega Millions play by paying an additional $1. The Megaplier options are X2, X3, X4, or X5. The Megaplier number is randomly selected just before the draw and multiplies the prize of a winner who played with the Megaplier. It is chosen from a pool of 15 balls: five are marked X2, six X3, three X4, and one X5.
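
To make the Megaplier odds concrete, here is a minimal Python sketch. It assumes the pool described in the text (15 balls: five X2, six X3, three X4, one X5) and that every ball is equally likely to be drawn. The function names are illustrative only and are not part of any official lottery tool.

```python
from fractions import Fraction

# Ball counts per multiplier, as stated in the text (15 balls in total).
MEGAPLIER_POOL = {2: 5, 3: 6, 4: 3, 5: 1}


def megaplier_odds(pool):
    """Return the probability of drawing each multiplier from the pool."""
    total = sum(pool.values())
    return {mult: Fraction(count, total) for mult, count in pool.items()}


def expected_multiplier(pool):
    """Return the average multiplier over many draws."""
    return sum(mult * p for mult, p in megaplier_odds(pool).items())


if __name__ == "__main__":
    for mult, p in megaplier_odds(MEGAPLIER_POOL).items():
        print(f"X{mult}: {p}")
    print("Expected multiplier:", expected_multiplier(MEGAPLIER_POOL))
```

Using exact fractions rather than floats keeps the odds readable (for instance, one ball in fifteen for X5) and avoids rounding noise when they are summed.
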
Mega Millions Lottery is one of the biggest lotteries in the United States. Mega Millions is an American multi-jurisdictional lottery game that is conducted in 45 states of the US. Not every state jurisdiction participates in this lottery. The jackpot increases when there are no top prize winners. The Mega Millions draw takes place every Tuesday and Friday at 11 p.m.

Winning numbers for Mega Millions 1/31/23 Tuesday, USA

The Mega Millions winning numbers will be announced at 11 p.m. The jackpot prize is $31 million for Mega Millions January 31, 2023. Winning numbers for Mega Millions 1/31/23 are 7, 9, 18, 29, 39, 13 with the 4X Megaplier. To check the winners' details, you need to go to the MegaMillions Winners page. There you will see how many people won in the latest draw. Check the payouts you can get after matching all the numbers or only a few numbers. The last draw was on January 27, 2023, and the jackpot prize was $20 million. The Mega Millions winning numbers were 4, 43, 46, 47, 61, 22 and the Megaplier was 4X. If you have matched the winning numbers, you can go to the Payout page to check how much you have won.

Interested players can purchase lottery tickets from a licensed lottery retailer. A Mega Millions ticket costs $2 per play. Tickets should be purchased one or two hours before the lottery is drawn. You can check the past winning numbers for the last 50 draws here. Visit the Results page to view a listing of Mega Millions results from the last eight draws, or go to our Past Drawings page for older winning numbers.

This article originally appeared on : Mega Millions winning numbers for Friday, Dec.

$515 million: May 21, 2021: Won in Pennsylvania

$522 million: June 7, 2019: Won in California
$533 million: March 30, 2018: Won in New Jersey
$536 million: July 8, 2016: Won in Indiana
$543 million: July 24, 2018: Won in California
17, 2013: Two winners in California, Georgia
$656 million: March 30, 2012: Three winners in Illinois, Kansas, Maryland

Powerball, Mega Millions: These are the luckiest states for jackpot winners Top Mega Millions jackpots

Until recently, lottery winners in New Jersey were required to be identified, but now winners will be able to stay anonymous under a new law that was signed by Gov. Powerball winner: $699.8 million Powerball winning ticket sold in California Meanwhile, the Powerball jackpot was at $500 million with a cash option of $355.9 million, according to the Powerball website. Recent Winner: $108 million Mega Millions winning ticket sold in Arizona The jackpot was an estimated $221 million with a cash option of $159.6 million, according to the Mega Millions website.

Should I be using underscores for the file name? Also, I put the image file in the layers folder that I put in the documents folder for the game.

Is this the script? It needs to be pasted into the RPG Maker script editor, under "Materials" and above "Main," not as a note. I'll just make steps to use that script thing.

1: Paste the Galv script into RPG Maker's script editor, under Materials and above Main.
2: In RPG Maker's folders, go into the "Graphics" folder, create a new folder and name it "Layers".
3: Whatever picture you want to show above all the tiles, place it in the new "Layers" folder.
4: In RPG Maker, make an event and call a script with this: Layer(map,layer,)
5: Activate the event, and the picture you put in "Layers" that you wanted to show above all tiles will appear on top.

I really appreciate the help (to everyone who has commented), though for some reason, it's just not appearing. For my script, I wrote "layer(2,Layer,)". I must be doing something wrong here.

It's too hard to understand things that way. Originally posted by Spider: You don't need to pay attention to everything in that video, not the brushes, nor the layers etc. In the tutorial video you showed, the artist was also working with layers. In GIMP you would create a picture out of different layers, and every layer would be saved as an independent picture and used as a Parallax (below player) or Picture (above player) in VX Ace. # fixes tagged pictures to the map (scrolls with the map)

Edit: Oh, I believe I misread the first topic. # put the tag in the affected picture's filename Here is a Yanfly script that seems to be still free for use, which does the job:

And another one to fix pictures on the map instead of the screen: It also needed a Parallax Scroll Fix script. VX Ace was a little special: it was not enough that the parallax needed the correct size for each map size. If you don't want to learn a parallax mapping script to have many layers, you could also use 2 of the simple scripts:

The contact_id column has a default value provided by the uuid_generate_v4() function. Therefore, whenever you insert a new row without specifying a value for the contact_id column, PostgreSQL calls uuid_generate_v4() to generate the value for it.

Second, insert some data into the contacts table: INSERT INTO contacts
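
As a loose analogy for the column default described in this tutorial, the sketch below uses Python's standard uuid module rather than PostgreSQL itself. The Contact class and its name field are hypothetical; only contact_id comes from the text. A default factory plays the role of DEFAULT uuid_generate_v4(): a random (version 4) UUID is generated only when the caller does not supply one.

```python
import re
import uuid
from dataclasses import dataclass, field

# Canonical text form: 32 hexadecimal digits in 8-4-4-4-12 groups.
UUID_TEXT = re.compile(
    r"^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$"
)


@dataclass
class Contact:
    # "name" is a hypothetical field; only contact_id is named in the text.
    name: str
    # Filled in automatically when no value is supplied, loosely
    # mirroring a column declared with DEFAULT uuid_generate_v4().
    contact_id: uuid.UUID = field(default_factory=uuid.uuid4)


if __name__ == "__main__":
    first = Contact("Ann")
    second = Contact("Bob")
    print(first.contact_id, second.contact_id)
    print(first.contact_id.version)  # version field of the generated UUID
    print(bool(UUID_TEXT.match(str(first.contact_id))))
```

Passing contact_id explicitly, for example Contact("Cy", contact_id=some_uuid), skips the generator, just as an INSERT that supplies the column value would bypass the default.
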

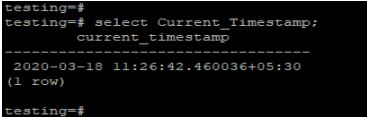

Summary: in this tutorial, you will learn about the PostgreSQL UUID data type and how to generate UUID values using a supplied module.

UUID stands for Universally Unique Identifier, defined by RFC 4122 and other related standards. A UUID value is a 128-bit quantity generated by an algorithm that makes it unique in the known universe using the same algorithm. The following shows an example of a UUID value: 40e6215d-b5c6-4896-987c-f30f3678f608

As you can see, a UUID is a sequence of 32 hexadecimal digits represented in groups separated by hyphens.

Because of its uniqueness, you often find UUIDs in distributed systems: a UUID offers a stronger uniqueness guarantee than the SERIAL data type, which generates values that are unique only within a single database.

To store UUID values in a PostgreSQL database, you use the UUID data type.

PostgreSQL allows you to store and compare UUID values, but historically it did not include functions for generating UUID values in its core (PostgreSQL 13 and later provide gen_random_uuid()). Instead, it relies on third-party modules that provide specific algorithms to generate UUIDs. For example, the uuid-ossp module provides some handy functions that implement standard algorithms for generating UUIDs.

To install the uuid-ossp module, you use the CREATE EXTENSION statement as follows: CREATE EXTENSION IF NOT EXISTS "uuid-ossp";

The IF NOT EXISTS clause allows you to avoid re-installing the module.

To generate UUID values based on the combination of the computer's MAC address, the current timestamp, and a random value, you use the uuid_generate_v1() function: SELECT uuid_generate_v1();

The result is returned in a column named uuid_generate_v1.

If you want to generate a UUID value solely based on random numbers, you can use the uuid_generate_v4() function. For example: SELECT uuid_generate_v4();

For more information on the functions for UUID generation, check out the uuid-ossp module documentation.

We will create a table whose primary key is of the UUID data type. In addition, the values of the primary key column will be generated automatically using the uuid_generate_v4() function.

First, create the contacts table using the following statement: CREATE TABLE contacts ( contact_id uuid DEFAULT uuid_generate_v4 (),

In this statement, the data type of the contact_id column is UUID.

A great show demands great (and plentiful) extras!

The series Burn Notice is one of the best shows currently on television. It has an outstanding cast, intelligent scripts (with snappy dialogue) and a great look. However, I hoped for more from this DVD edition in terms of extras. The scene-specific commentaries were good, but there weren't enough of them. Bruce Campbell always makes for a great commentary track, so if you've got him, make the most of it. Campbell's commentaries always have the right balance of technical craft, one-liners, critique, anecdotes and personality that makes commentaries worth listening to.


Allow yourself a good slice of the data to work with, for example a year or two years' worth of data.

Filter the data in the Model

Add your parameters to every table in your data set that requires incremental load. Find your static date, for example Order Date, Received Date etc. Then Close and Apply.

Define your Incremental Refresh policy in Power BI Desktop

Go to your first table and choose incremental refresh.

Example screenshot of an incremental refresh policy

Store Rows

In the above example we are storing everything for 5 years. It's set to months so the partitions are smaller.

Refresh Rows

If this was running every single day then you would only need to refresh rows in the last 1 day. However, as a just-in-case, 1 month has been used, in case for any reason the job is suspended or doesn't run.

Detect Data Changes

Detect Data Changes has been used.
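
The store and refresh windows in the example policy (store 5 years, refresh the last 1 month) can be pictured with a small Python sketch. This is only a rough model under stated assumptions, not Power BI's actual partitioning logic: windows are aligned to the first day of a month, and the row filter is assumed to be half-open (RangeStart inclusive, RangeEnd exclusive) so that a row on a boundary date is never counted twice. The helper names are made up for illustration.

```python
from datetime import date


def months_back(day, months):
    """Return the first day of the month `months` months before `day`."""
    index = day.year * 12 + (day.month - 1) - months
    return date(index // 12, index % 12 + 1, 1)


def in_range(row_date, range_start, range_end):
    """Mimic the table filter: keep rows with RangeStart <= date < RangeEnd."""
    return range_start <= row_date < range_end


def refresh_policy(today, store_years=5, refresh_months=1):
    """Return (store_start, refresh_start) for a store/refresh policy."""
    store_start = months_back(today, store_years * 12)
    refresh_start = months_back(today, refresh_months)
    return store_start, refresh_start


if __name__ == "__main__":
    store_start, refresh_start = refresh_policy(date(2023, 1, 15))
    print("Rows kept from:", store_start)
    print("Rows refreshed from:", refresh_start)
```

Rows older than the store window would be dropped, rows between the two dates are kept but left alone, and only rows on or after the refresh start are reloaded on each run.
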

Incremental refresh became available for Power BI Pro a few months ago, but when tested there was an issue.

Error Resource Name and Location Name Need to Match.

This should have been fixed in April, so here is a quick checklist of how you approach incremental refresh.

Define your Incremental Refresh Policy

- Are new rows simply added to the dataset in Power BI?
- If rows can be updated, how far back does this go?
- How many years' worth of data do you want to retain?
- Which tables in your data set need incremental refresh?
- Can you define the static date within the table that will be used for the incremental refresh?

Each of these points is very important and will establish what you need to do to set up the incremental refresh, from your data source up to Power BI Desktop and Service.

Set up Incremental Refresh in Power Query Editor

Go to Transform data to get to the Power Query Editor (you can either be in Desktop or creating a dataflow in the Service). The two parameters that need setting up for incremental loading are RangeStart and RangeEnd. RangeStart and RangeEnd are set in the background when you run Power BI.

Query Folding: RangeStart and RangeEnd will be pushed to the source system. You do get a warning message if you can't fold the query. It's not recommended to run incremental processing on data sources that can't query fold (flat files, web feeds). It's possible to query fold over a SharePoint list. The recommendation is to set incremental processing up over a relational data store.

For the desktop.

To delete a table, call the Tables - Delete API. To delete a table, run the az monitor log-analytics workspace table delete command. Select the Cloud Shell button in the Azure portal and ensure the environment is set to PowerShell. Copy the following PowerShell code and replace the Path parameter with the appropriate values for your workspace in the Invoke-AzRestMethod command. Paste it into the Cloud Shell prompt to run it.
To create a custom table in the Azure portal:

From the Log Analytics workspaces menu, select Tables. Select Create and then New custom log (DCR-based). Specify a name and, optionally, a description for the table. You don't need to add the _CL suffix to the custom table's name; this is added automatically to the name you specify in the portal. Select an existing data collection rule from the Data collection rule dropdown, or select Create a new data collection rule and specify the Subscription, Resource group, and Name for the new data collection rule. Select a data collection endpoint and select Next. Select Browse for files and locate the JSON file in which you defined the schema of your new table.

All log tables in Azure Monitor Logs must have a TimeGenerated column populated with the timestamp of the logged event.

If you want to transform log data before ingestion into your table: The transformation editor lets you create a transformation for the incoming data stream. This is a KQL query that runs against each incoming record. Azure Monitor Logs stores the results of the query in the destination table. Select Apply to save the transformation and view the schema of the table that's about to be created. Verify the final details and select Create to save the custom log.

To create a custom table, call the Tables - Create Or Update API. To create a custom table, run the az monitor log-analytics workspace table create command. Use the Tables - Update PATCH API to create a custom table with the PowerShell code below. This code creates a table called MyTable_CL with two columns. Modify this schema to collect a different table.

From the Log Analytics workspace menu, select Tables. Search for the tables you want to delete by name, or by selecting Search results in the Type field. Select the table you want to delete, select the ellipsis (...) to the right of the table, select Delete, and confirm the deletion by typing yes.
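
The two schema rules mentioned for custom tables (a _CL suffix on the name and a mandatory TimeGenerated column) can be checked before any request is sent. The Python sketch below only builds and validates a request body locally; it does not call Azure. The body layout (properties, schema, name, columns), the column type strings, and the RawData column are assumptions made for illustration, so check the Tables API reference before relying on them.

```python
import json

REQUIRED_COLUMN = "TimeGenerated"
CUSTOM_SUFFIX = "_CL"


def build_table_payload(table_name, columns):
    """Build a request body describing a custom table.

    The body layout (properties -> schema -> name/columns) is an assumption
    made for this sketch, not a confirmed API contract.
    """
    if not table_name.endswith(CUSTOM_SUFFIX):
        table_name += CUSTOM_SUFFIX  # custom table names carry the _CL suffix
    if REQUIRED_COLUMN not in [col["name"] for col in columns]:
        raise ValueError(f"schema must include a {REQUIRED_COLUMN} column")
    return {"properties": {"schema": {"name": table_name, "columns": columns}}}


if __name__ == "__main__":
    payload = build_table_payload(
        "MyTable",
        [
            {"name": "TimeGenerated", "type": "datetime"},
            {"name": "RawData", "type": "string"},  # hypothetical second column
        ],
    )
    print(json.dumps(payload, indent=2))
```

Failing early with a ValueError keeps a schema without TimeGenerated from ever reaching the service, where the mistake would be harder to diagnose.
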

Example: ReputationAddPoints GildedVale Positive 4 For axis, set positive or negative depending if you want to increase or lose reputation.

They are also sought after for their durability against high volumes of foot traffic due to their higher firing temperature during manufacture, and their high resistance to moisture allows them to be specified indoors and outdoor use for flooring and, depending on weight, for wall cladding. Porcelain tiles are composed of finely ground sand, feldspar and specific clays and are renowned for their ability to resist water ingress due to their higher density in comparison with ceramic and other forms of tiles, which helps with their ability to repel liquids to prevent staining, scrapes and marks while being low maintenance after installation. The pinstripe effect can be achieved with a grain in the mould within which it is fired, or scraping the exposed surface by hand before firing, blending neutrally in tone and texture with a variety of colours, materials and textures on neighbouring surfaces. The rough, fine concrete-like aggregate grain is ideal for wet spaces to increase grip and slip resistance. The joints are filled with coarse cement mortar and are 5 mm (0.2 inches) in width.Ī fine concrete, neutral, grey-white porcelain tile with a rough, strongly linear grain creating a pinstripe look due to the shadows in the troughs of the grooves, commonly used in bathrooms, kitchens and other high footfall, frequently wet areas of domestic and commercial environments. The image represents a physical area of 1350 x 1350 mm (53.1 x 53.1 inches) in total, with each individual tile measuring approximately 40 x 40 mm. Thank you for supporting our free material resource! Enjoy what other users have already created for you.A seamless tile texture with pinstripe tile arranged in a stack pattern. We will never sell or further distribute your creations except via download on our website. By submitting your material to us you agree that it is free to use, private and commercially for everyone. We must not publish textures or data that is protected by copyright. 
Library file to this email address: Please be 100% sure that you own the rights on the created material and texture files. Send everything including all texture files, the sample render image and either the max-file or the.OR save the max file using the same name.Save your material as a single library, give it the same name.dark grey concrete, fine red leather, brushed steel) Save your render with a suitable name that represents your material (e.g.Render the image with the preset settings.Adjust the material if necessary (for lighting and stuff).Load the scene and apply your material to the sample object.Download our Sample scene for Vray and 3DSMax here sample scene.If you want to contribute to this site too just follow these simple steps: Them to our site to help and inspire others. People from around the world put there knowledge and love into their materials and uploaded This site wouldn't be such a great vray material resource without the help of our community! We encrypted the passwords in md5 + and Upload He sent me the whole dump - email, password hashed and in clear text. 09:30: I opened an E-Mail about a potential data breach, including samples (email and hashed passwords), got in contact with the sender to proof the data.10:05: Website has been taken offline to prevent further access.10:17: Changing SSH- and Database passwords again (134bits, as usual).11:35: Reporting to the local data protection authority ().19:00: Still analysing the data, comparing users and timestamps, also hoster prepared the e-mail server for sending out the information mail.Information-Mail goes out to all records from the dump, soon. Last email entry has "" as registration date.

How Redshift scores over traditional data warehouses

Traditionally, enterprises have encountered several challenges while setting up data warehouses. First, on-premise warehouses are expensive and take months to get running. This factor required firm budgetary and strategic commitment from leadership. Second, after a few months or years, data size invariably tended to increase, meaning companies needed to choose between investing in new hardware or tolerating slow performance.

Redshift's cloud-based solution helps enterprises overcome these issues. It takes just minutes to create a cluster from the AWS console. Data ingestion into Redshift is performed by issuing a simple COPY command from Amazon S3 (Simple Storage Service) or DynamoDB. Additionally, the scalable architecture of Redshift allows companies to place a dynamic request to scale infrastructure up or down as requirements change.

As the server clusters are fully managed by AWS, Redshift eliminates the hassle of routine database administration tasks. Complex tasks such as data encryption are easily managed through Redshift's built-in security features. The platform also performs a continuous backup of data, eliminating the risk of losing data or the need to plan for backup hardware. Given that Redshift is a cost-effective, reliable, scalable, and fast-performing solution, companies are naturally gravitating towards the option of data-warehouse-as-a-service (DWaaS).

The key reason for Redshift's emergence as one of the most popular cloud data warehousing solutions is its underlying architectural elements. Moreover, AWS focuses on continuous innovation of the platform by adding newer features and offering product extensions. Let's look into several Redshift design features that have changed the way we gain business insights.

Traditional relational databases use row-based storage. This is ideal for use cases that involve querying and updating specific rows, such as in CRM and ERP applications. A row-based database would store the data in Table 1 as:
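
Table 1 is not reproduced here, so as a rough illustration of row-based versus columnar storage, both of which this article mentions, the Python sketch below uses made-up records. It models the two layouts with plain lists and dictionaries; it says nothing about how Redshift or any other database lays out data on disk.

```python
# Hypothetical sample records; the article's Table 1 is not reproduced here.
ROWS = [
    {"id": 1, "name": "Ann", "sales": 100},
    {"id": 2, "name": "Bob", "sales": 250},
    {"id": 3, "name": "Cy", "sales": 75},
]


def to_row_store(rows):
    """Row-based layout: all fields of one record sit together."""
    return [tuple(record.values()) for record in rows]


def to_column_store(rows):
    """Columnar layout: all values of one column sit together."""
    return {key: [record[key] for record in rows] for key in rows[0]}


def total_sales(column_store):
    """An aggregate only has to read one column in the columnar layout."""
    return sum(column_store["sales"])


if __name__ == "__main__":
    print(to_row_store(ROWS))
    columns = to_column_store(ROWS)
    print(columns)
    print(total_sales(columns))
```

Updating one customer touches a single tuple in the row layout, while summing sales touches a single list in the column layout, which is the trade-off between transactional and analytical workloads.
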

Over the past 12 years, Amazon's cloud ecosystem has experienced astounding growth. It's estimated that by 2020, Amazon Web Services (AWS) will register revenues of $44 billion, twice the combined revenue of its two key cloud competitors: Google Cloud and Microsoft Azure. AWS Redshift is a major driver of that growth. One of Amazon's flagship products, AWS Redshift's cloud-based data warehouse service is an industry game changer. Drawn by its performance and scalability, the number of enterprise customers using Redshift is increasing by the day. In this article, we explore the world of Redshift, its powerful features, and why so many companies are choosing Redshift for data storage and analytics.

What is AWS Redshift?

AWS Redshift is a cloud-based petabyte-scale data warehouse service offered as one of Amazon's ecosystem of data solutions. Based on PostgreSQL, the platform integrates with most third-party applications by applying its ODBC and JDBC drivers. Redshift delivers incredibly fast performance using two key architectural elements: columnar data storage and massively parallel processing design. In 2012, Amazon invested in the data warehouse vendor ParAccel (now acquired by Actian) and leveraged its parallel processing technology in Redshift. The solution has quickly become an integral part of the big data analytics landscape through its ability to perform SQL-based queries on large databases containing a mix of structured, semi-structured, and unstructured data. Over the past five years, Redshift has emerged as one of the leading cloud solutions through its unparalleled ability to provide organizations with business intelligence.
